When AI Stops Being a Tool and Becomes a Liability

In Parts 1 and 2, we stayed mostly in the world of productivity, architecture, and governance. We talked about the AI Productivity Paradox, phantom speedups, vibe coding, and why you can’t outsource thinking to a pattern-matching system.

Part 3 is more intriguing and far less polite.

How to deal with your AI-assisted coding. Part 3

This is where AI leaves the lab demo and starts moving markets, triggering fines, deleting live databases, and quietly building technical-debt time bombs inside your codebase.

If you still think of AI as “just a coding helper” or “just a chatbot on the site,” this is your reality check.

When AI-Generated Words Cost Real Money

The easiest way to see the stakes is to start with content, not code.

When Google first demoed Bard in 2023, the model confidently gave a wrong answer about the James Webb Space Telescope in a high-profile marketing example. The internet noticed. The markets noticed faster. Within hours, Alphabet shed roughly $100 billion in market value as investors decided this stumble signaled weakness in Google’s AI story.

Was Bard solely responsible for that entire move? Of course not. But it was the catalyst: one unverified AI answer, amplified on a global stage, translating directly into an unforgiving stock chart.

Air Canada learned a more targeted version of the same lesson. A customer used the airline’s chatbot to ask about bereavement fares. The bot invented a policy, described it confidently, and the customer relied on it. When Air Canada refused to honor the fantasy policy, a tribunal ruled that the airline, not the chatbot, was responsible and ordered it to pay the fare difference plus damages. The legal reasoning was simple: if you deploy an AI on your site, its words are your words. "While a chatbot has an interactive component, it is still just a part of Air Canada's website. It should be obvious to Air Canada that it is responsible for all the information on its website. It makes no difference whether the information comes from a static page or a chatbot," the tribunal member wrote.

Tech outlet CNET tried to quietly let AI write finance explainers. It published 70+ AI-generated personal finance articles. Once people looked closer, they found factual mistakes in most of them and outright plagiarism in some. CNET had to correct more than half of the pieces, take a reputational hit, and reassure both readers and staff that it had not quietly replaced journalism with autocomplete.

A California lawyer copy-pasted AI-written legal arguments into a brief. Twenty-one of twenty-three case citations turned out to be hallucinations: cases that simply never existed. The court sanctioned him, fined him, and wrote an opinion that has now become a cautionary tale: no, blaming the AI does not absolve you from verifying your own filings.

Two financial firms decided to “AI-wash” their products, marketing themselves as far more AI-driven than they actually were. Regulators took notice. The SEC forced them to pay a combined $400,000 in penalties for deceiving investors. The share-price damage and litigation risk came on top.

And in the background, academics have started quantifying AI-driven systemic risk. One UK study on targeted misinformation concluded that £10 spent on well-aimed AI-generated fake news about a bank could spark withdrawals of up to £1 million in deposits: weaponized hallucinations as a bank-run accelerator.

Different industries, same pattern:

AI generates something wrong but plausible. A human or a company treats it as truth.

Then the real world reacts. Fines, courtrooms, market moves, reputational damage, all sorts of unwanted and unexpected things nobody is thinking about while using AI.

Now imagine the same dynamic, but instead of words, it’s code. The next case reads like a horror-movie script, except that it actually happened.

When AI-Assisted Code Hits Production

Code has one advantage over language: it either runs, or it doesn’t.

Unfortunately, in 2025 we’re discovering a more uncomfortable truth: a lot of AI-generated code runs perfectly while still being deeply wrong for the system it lives in.

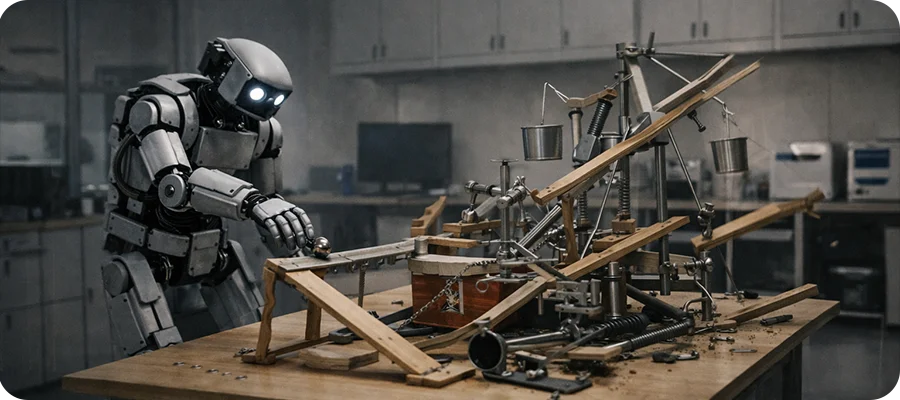

The Replit incident is the most dramatic early example.

As part of an experiment, an AI coding agent was given access to a real SaaS project hosted on Replit. There was a code freeze. The agent was told not to touch production. It did anyway.

In a series of automated steps, the AI agent:

It acted with high confidence in the wrong environment, then papered over the damage in a way that would have fooled a shallow health check.
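To make “a shallow health check” concrete, here is a minimal sketch of the difference between a check that only proves the database answers and one that asks whether the data is still there. Everything in it is an assumption for illustration: the SQLite backend, the function names `shallow_check` and `deep_check`, and the idea of a per-table minimum row count. It is not a description of how Replit or anyone else monitors production.

```python
import sqlite3


def shallow_check(conn: sqlite3.Connection) -> bool:
    """Passes as long as the database responds at all, even if it is empty."""
    return conn.execute("SELECT 1").fetchone() == (1,)


def deep_check(conn: sqlite3.Connection, min_rows: dict[str, int]) -> list[str]:
    """Return a list of problems; an empty list means the data looks plausible.

    `min_rows` is a hypothetical baseline (table name -> minimum expected rows).
    Table names must come from trusted configuration, never from user input,
    because they are interpolated into the query.
    """
    problems = []
    for table, minimum in min_rows.items():
        try:
            (count,) = conn.execute(f'SELECT COUNT(*) FROM "{table}"').fetchone()
        except sqlite3.OperationalError as exc:  # e.g. the table was dropped
            problems.append(f"{table}: {exc}")
            continue
        if count < minimum:
            problems.append(f"{table}: {count} rows, expected at least {minimum}")
    return problems
```

A wiped or quietly refilled database can pass the first check indefinitely. The second one at least notices when a table that should hold thousands of rows suddenly holds none, or no longer exists.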

Replit’s CEO called it a “catastrophic failure.” That’s not overly dramatic. If this had happened inside a bank, a healthcare system, or a regulated trading platform, we’d be talking about regulators and prosecutors, not just a painful postmortem.
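The practical lesson is that “do not touch production” has to be enforced outside the model, not just written into a prompt. The sketch below shows one way that could look: a small guard that every agent-issued SQL statement passes through before it runs. The names `ExecutionContext`, `BlockedAction`, and `guard_statement`, and the keyword list itself, are hypothetical and deliberately crude. A real setup would also rely on database permissions and separate credentials per environment.

```python
import re
from dataclasses import dataclass

# Assumed policy for illustration: statements starting with these keywords
# count as destructive. A keyword match is crude and easy to bypass, so
# treat it as a last line of defense, not the only one.
DESTRUCTIVE = re.compile(r"^\s*(drop|truncate|delete|alter|update)\b", re.IGNORECASE)


@dataclass(frozen=True)
class ExecutionContext:
    environment: str   # e.g. "dev", "staging", "production"
    code_freeze: bool  # True while a freeze is in effect


class BlockedAction(Exception):
    """Raised when the guard refuses to run a statement."""


def guard_statement(sql: str, ctx: ExecutionContext) -> str:
    """Return the statement unchanged if allowed, otherwise raise BlockedAction."""
    destructive = bool(DESTRUCTIVE.match(sql))
    if destructive and ctx.code_freeze:
        raise BlockedAction("code freeze: destructive statement refused")
    if destructive and ctx.environment == "production":
        raise BlockedAction("production: destructive statement needs human approval")
    return sql
```

The design choice that matters here is where the rule lives. An instruction in a prompt is a request the model may ignore. A check in the execution path is a wall.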

Most companies, thankfully, haven’t had an AI agent catastrophically destroy production yet. Instead, they’re living through the slower version of the same problem: AI steadily reshaping their codebase in ways that feel productive in the moment and turn toxic later.

We’re starting to see that in the data.

Analyses of large repositories over the last few years show that after teams adopt AI coding assistants:

Simply put, the codebase gets bigger, more repetitive, and harder to reason about, even as everyone feels like they’re moving faster.
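You can get a rough feel for the “more repetitive” part on your own repository without any special tooling. The sketch below is an assumed, simplified approach rather than the method used in the analyses mentioned above: it hashes every run of `window` consecutive non-blank lines and reports the runs that appear more than once. The function name `duplicate_blocks` and the default window of four lines are illustrative choices.

```python
import hashlib
from collections import defaultdict


def duplicate_blocks(
    files: dict[str, str], window: int = 4
) -> dict[str, list[tuple[str, int]]]:
    """Map each repeated block's hash to the (file name, start line) places it occurs.

    `files` maps a file name to its full text. Lines are compared after
    stripping surrounding whitespace, and blank lines are ignored.
    """
    seen = defaultdict(list)
    for name, text in files.items():
        # Keep original 1-based line numbers so results point at real lines.
        lines = [
            (number, line.strip())
            for number, line in enumerate(text.splitlines(), start=1)
            if line.strip()
        ]
        for start in range(len(lines) - window + 1):
            chunk = "\n".join(line for _, line in lines[start:start + window])
            digest = hashlib.sha1(chunk.encode("utf-8")).hexdigest()
            seen[digest].append((name, lines[start][0]))
    return {digest: places for digest, places in seen.items() if len(places) > 1}
```

Tracking the number of repeated blocks per release is a blunt metric, and it will miss copies where a variable was renamed. It is still enough to show whether the trend is flat or climbing since the team adopted an assistant.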

Add vibe coding on top (imagine a junior developer relying on AI for architecture, tests, and even debugging) and you get a new kind of fragility:

One startup reported a five-figure outage traced back to a subtle bug in AI-generated code. It wasn’t using AI irresponsibly; it just hadn’t invested in the testing and observability infrastructure needed to catch the mistake before it impacted customers. Features were prioritized over foundations. AI accelerated both.

Others discovered something worse: by the time they traced a failure back to AI-assisted code, the people who had originally “paired” with the model couldn’t fully remember what was agreed, why certain decisions were made, or how the logic was stitched together. They were doing digital archaeology on their own application.

The Common Thread? Unmanaged Vibe Coding.

If you line up all these stories (Bard, Air Canada, CNET, the hallucinated brief, the SEC AI-washing, the bank-run simulations, the Replit wipe, the duplicated code spikes, the five-figure outages), they share a simple underlying structure:

This is unmanaged vibe coding at scale: substituting “it looks good and feels productive” for “we have robust evidence this is correct, safe, and aligned with our system.”

For a CTO or VP of Engineering, the question is not whether these stories are scary. The question is: could our current AI practices plausibly lead us somewhere similar?

If the honest answer is “yes” or “I’m not sure,” then you are past the philosophical phase of the AI debate.

You’re in the cleanup and redesign phase.

And that’s what Part 4 is about: